qPLANT

Project Objective

With the emergence of smartphones and their increasing popularity, we envision a mobile application capable of identifying plants from geo-tagged images. The application sends user-generated images to the server and retrieves information about plant taxonomy and habitat. The system uses plant features such as leaf properties, branching structure, and flower attributes, where applicable, as well as location information, to identify the species. The server returns a list of plant species that are closely related based on phenotypical characteristics.

Coal Oil Point Reserve

The University of California (UC) Natural Reserve System (NRS) manages 37 natural areas representing nearly all the State's major ecosystems in as undisturbed a condition as possible. This reserve system protects California's natural heritage for the public trust and provides protected natural areas for research and teaching to contribute to the understanding and wise management of the Earth and its natural systems. The Coal Oil Point Reserve is one of these reserves and offers many opportunities for public and student education as well as research.

The Coal Oil Point Natural Reserve (COPR) consists of 170 acres of protected coastal habitats along the south coast of Santa Barbara County, adjacent to the West Campus of UCSB. The diversity of habitats and wildlife at the Reserve is striking, and some of these species are now rare along the coast. For example, the COPR beach is breeding habitat for the Pacific coastal population of the threatened Western Snowy Plover and for the endangered California Least Tern. The Belding's Savannah Sparrow breeds in the pickleweed habitat at Devereux Slough. The Reserve has one of the most pristine remnants of Coastal Dune Scrub in Santa Barbara County and contains a number of rare plant species. Several types of wetlands, such as vernal pool, dune swale, salt flat and salt marsh, are part of the 5% of coastal wetlands remaining in California. In a short walk, visitors can observe all these habitats and learn why it is important to preserve them.

Easy access to the Reserve's great natural beauty provides an opportunity to foster education and connect people to nature. One way to engage people with their local environment is to teach them to recognize the native plant species. This project will provide a cutting-edge tool to help people identify plants. The Reserve's 3-mile nature trail contains a number of plants from the coastal scrub community, the most important vegetation type and the one with the fastest decline in coastal California. People love what they know and protect what they love. Thus, by learning the local flora, people will help preserve it.

Plant Identification Challenges

The difficulty in plant identification comes from the fact that all elements of the plant (flower, leaf, branching structure, etc.) give clues that need to be examined in order to identify the plant. Therefore, our system cannot be limited to leaves, as existing plant identification systems are. Furthermore, the variety of leaf and flower types, as well as the complicated 3D geometry of the branching structure, leaves and petals, poses difficulty. Our system aims to address these challenges by taking advantage of as many plant features as possible, 2D as well as 3D.

Data Collection Process

During the summer of 2010 we started a systematic data collection process (one undergraduate research intern and a graduate student mentor have been involved in this work, supervised by Professor Manjunath and the Director of the COPR). We are collecting data along the nature trail mentioned above. Because the appearance of plants varies greatly throughout the year, our data collection effort will be ongoing.

Throughout the data collection process, we consider the variety in features from plant to plant as well as from leaf to leaf on the same plant, but we assume that the query images contain only mature, healthy leaves and healthy, fully blossomed flowers. Also, we do not require the user to image the plant on a white background, but we do expect a uniform background. We do not consider trees at this point.

As mentioned above, we aim to extract 2D features, such as leaf geometry and venation structure, as well as 3D features, such as the shape of the leaf and the branching structure. Ideally, the user should take several pictures from different angles so that the 3D structure can be extracted. We also consider the possibility of providing the user with a wallet-sized calibration pattern, which should be captured in the query images.

We collect data in the following manner. The camera is calibrated using a checkerboard pattern. Because the computation of 3D features requires images from multiple angles, we use the multi-pose imaging procedure illustrated in the figure below. The checkerboard pattern and the color calibration patterns are mounted next to the target, and several images are taken from different angles. The calibration information allows us to estimate the relative position of the camera in the two poses. The target can be any of the subjects discussed above: leaf, flower, fruit, branch, or whole plant.
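The relative-pose step can be illustrated with a minimal sketch. It assumes that calibration yields, for each view, a rotation R and translation t mapping points in the checkerboard frame into that view's camera frame (x_cam = R X + t); the function names below are hypothetical and are not part of the project's code. Under that assumption, the transform from the first camera frame to the second is R_rel = R2 R1^T and t_rel = t2 - R_rel t1.

```python
import math


def mat_mul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def transpose(a):
    """Transpose a 3x3 matrix (the inverse of a rotation matrix)."""
    return [[a[j][i] for j in range(3)] for i in range(3)]


def mat_vec(a, v):
    """Apply a 3x3 matrix to a 3-vector."""
    return [sum(a[i][k] * v[k] for k in range(3)) for i in range(3)]


def relative_pose(r1, t1, r2, t2):
    """Return (R_rel, t_rel) such that x_cam2 = R_rel * x_cam1 + t_rel.

    Assumes each (r, t) maps checkerboard coordinates to camera
    coordinates for one pose: x_cam = r * X + t.
    """
    r_rel = mat_mul(r2, transpose(r1))
    rotated_t1 = mat_vec(r_rel, t1)
    t_rel = [t2[i] - rotated_t1[i] for i in range(3)]
    return r_rel, t_rel


def rot_z(degrees):
    """Rotation about the z axis, used here only to build test poses."""
    c = math.cos(math.radians(degrees))
    s = math.sin(math.radians(degrees))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
```

In practice the per-view rotations and translations would come from a calibration library; the sketch only shows how two such poses combine into one relative pose.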

Plant Identification System

Building the Database

Images are collected as explained above. The imaged subject can be: leaf, flower, branch, fruit, whole plant, or a combination thereof. The subject can be photographed with one view, or with multiple views, depending on whether 2D or 3D features will be extracted. Every image comes with date/time information and GPS coordinates. The data is stored in the BISQUE system which the VRL maintains.

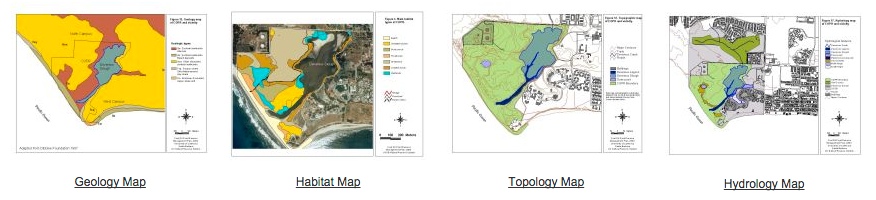

The figure below explains the information that is annotated for every image collected. This information includes, but is not restricted to: genus and species, ecology information (such as habitat, seasonal variations, etc.), and phenotypical features (age and physical characteristics). Additionally, several maps are available from COPR (geology, habitat, topography and hydrology maps). The information in these maps is extracted using the GPS coordinates and is associated with the plant. Thus, for example, plants belonging to a specific habitat will be marked according to the maps. Also, to aid the image segmentation process, the background type can be specified as natural or artificial (such as white paper). Features that are later extracted using image processing techniques are also associated with the image and can later be retrieved for classification.
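A per-image annotation record of this kind could be sketched as follows. The field names, the `lookup_region` helper, and the representation of a map as labeled latitude/longitude boxes are all assumptions made for illustration; they do not describe the actual BISQUE metadata schema or the format of the COPR maps.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Optional, Tuple

# Hypothetical map representation: (min_lat, min_lon, max_lat, max_lon, label).
Region = Tuple[float, float, float, float, str]


def lookup_region(regions: List[Region], lat: float, lon: float) -> Optional[str]:
    """Return the label of the first region containing the point, if any."""
    for min_lat, min_lon, max_lat, max_lon, label in regions:
        if min_lat <= lat <= max_lat and min_lon <= lon <= max_lon:
            return label
    return None


@dataclass
class ImageAnnotation:
    """Illustrative annotation attached to one collected image."""
    genus: str
    species: str
    plant_part: str              # e.g. "leaf", "flower", "branch"
    captured_at: datetime
    latitude: float
    longitude: float
    background: str = "natural"  # or "artificial"
    habitat: Optional[str] = None
    # Extracted image features, keyed by a feature name.
    features: Dict[str, List[float]] = field(default_factory=dict)

    def attach_habitat(self, habitat_map: List[Region]) -> None:
        """Mark the plant with the habitat found at its GPS coordinates."""
        self.habitat = lookup_region(habitat_map, self.latitude, self.longitude)
```

The same lookup could be repeated for the geology, topography and hydrology maps, with one field per map.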

User Query System

The figure below shows the system that we envision once the database is complete. The user takes a picture of a portion of the plant with their smartphone. The image is sent to the server, along with the date, time and GPS coordinates. Additionally, the system asks the user to clarify what portion of the plant is visible (leaf, branch, etc.). Optional parameters can also be specified to aid feature extraction and classification. These include the age of the plant, phenotypical information, the type of background present in the image (natural or artificial), and keywords that can narrow down the classification.

Once the information is received on the server, plant features can be extracted and matched with the database. The GPS coordinates are used to pull up information from the geology, habitat, topography and hydrology maps. The time and date are used to extract season information. Specific features are extracted using image processing techniques, as described in the figure below. These features are used for classification, and the closest plant species, if any, are returned to the user, along with some relevant information.
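The server-side steps above can be sketched under stated assumptions. The text does not specify the classifier, so this example swaps in a plain nearest-neighbor ranking over feature vectors; the season boundaries (meteorological, northern hemisphere), the `rank_species` name, and the dictionary layout of database entries are likewise hypothetical.

```python
import math
from datetime import date


def season_of(day: date) -> str:
    """Map a date to a season (assumed: meteorological, northern hemisphere)."""
    seasons = {12: "winter", 1: "winter", 2: "winter",
               3: "spring", 4: "spring", 5: "spring",
               6: "summer", 7: "summer", 8: "summer",
               9: "autumn", 10: "autumn", 11: "autumn"}
    return seasons[day.month]


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_species(query_features, query_habitat, database,
                 top_k=3, max_distance=None):
    """Return up to top_k species names, closest first.

    Entries whose habitat differs from query_habitat are skipped when a
    habitat is given. If max_distance is set, entries farther away than
    that are dropped, so the result may be empty ("if any").
    """
    candidates = []
    for entry in database:
        if query_habitat is not None and entry["habitat"] != query_habitat:
            continue
        distance = euclidean(query_features, entry["features"])
        if max_distance is None or distance <= max_distance:
            candidates.append((distance, entry["species"]))
    candidates.sort()
    return [species for _, species in candidates[:top_k]]
```

Filtering on habitat before computing distances shows how location information can narrow the candidate set; a season filter based on `season_of` could be added in the same way.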

Infrastructure

We are in the process of building the database of geo-tagged images along the 3-mile nature trail at the Coal Oil Point Reserve. These images include pictures of leaves, branches, flowers, fruit and whole plants. The Reserve includes roughly 130 native species, of which about 30 are common. We currently aim to obtain images of the common species along the trail over all seasons. The images are obtained using a DSLR camera (Canon EOS 7D) together with a GPS device (i-gotU GT-120). The database is incorporated into the Bio-Image Semantic Query User Environment (BISQUE) system that the VRL maintains.
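Because the camera and the GPS device are separate, each image has to be paired with a position. The text does not say how this is done; one common approach, sketched here as an assumption, is to interpolate the GPS track at the image's capture time. The `interpolate_position` name and the track layout are hypothetical, and the sketch assumes the camera and GPS clocks are synchronized.

```python
from bisect import bisect_left
from datetime import datetime


def interpolate_position(track, when):
    """Estimate (lat, lon) at time `when` from a time-sorted GPS track.

    `track` is a list of (datetime, lat, lon) tuples sorted by time.
    Times outside the track are clamped to the first or last fix.
    """
    times = [point[0] for point in track]
    i = bisect_left(times, when)
    if i == 0:
        return track[0][1], track[0][2]
    if i == len(track):
        return track[-1][1], track[-1][2]
    t0, lat0, lon0 = track[i - 1]
    t1, lat1, lon1 = track[i]
    # Fraction of the way from the earlier fix to the later one.
    w = (when - t0).total_seconds() / (t1 - t0).total_seconds()
    return lat0 + w * (lat1 - lat0), lon0 + w * (lon1 - lon0)
```

Linear interpolation is adequate when fixes are a few seconds apart and the photographer moves slowly; a real pipeline would also need to handle clock offsets between the two devices.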

Bisque is a web-based platform specifically designed to provide researchers with organizational and quantitative analysis tools for 5D image data. Users can extend Bisque with both data model and analysis extensions in order to adapt the system to local needs. Bisque's extensibility stems from two core concepts: a flexible metadata facility and an open web-based architecture. Together these empower researchers to create, develop and share novel bioimage analyses. Bisque is web-based, cross-platform and open-source. The system is also available as software-as-a-service through the Center for Bio-Image Informatics at UCSB.